A Look At Docker & Containers Technology

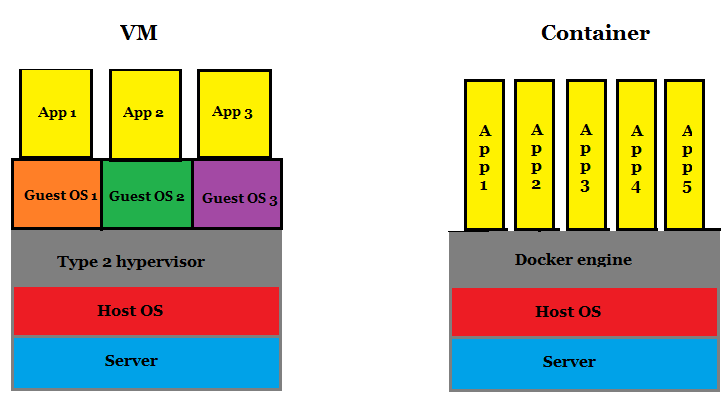

Virtualisation is the process of running virtual instances of computer systems that are abstracted from the underlying hardware. Several operating systems (OSs) can run simultaneously on a single physical machine, each as a virtual instance isolated from the others. These instances are called virtual machines (VMs). A VM can be stored as an image for backup, used to test software in different environments, or migrated to another location altogether.

Example: The hardware of an enterprise server fails and takes the OS down with it. Because the OS was running as a VM, the system administrator picks up its backup (VMs are stored as image files, each a snapshot of the original instance) and deploys it on other working hardware in minutes.

A VM virtualises a whole OS. With Docker and containers, we can instead virtualise just the application: it is packaged so that it runs the same way on different platforms, much as a VM does.

What is Docker?

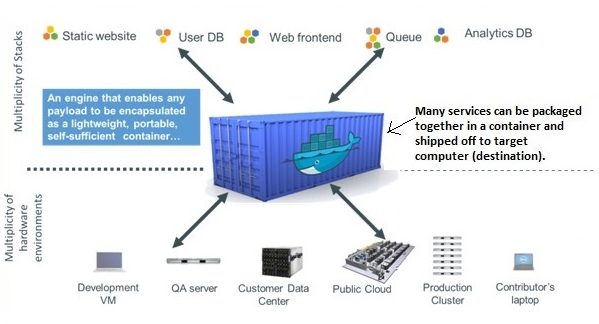

Docker is an open-source ecosystem of tools for creating, managing and deploying applications. The general idea is to build applications, package them into containers and ship them for deployment. Docker is an OS-level virtualisation technology: a container uses the kernel of the computer on which it is deployed (the host) instead of bringing its own OS, and the package carries only what the application itself needs, that is, its libraries, dependencies, supporting applications, etc. With the help of Docker, we create containers whose applications behave consistently irrespective of the platform. Docker is available for Linux, Windows and Mac.

What is a Container?

A container is a bundle of an application and all its dependencies that remains isolated from the host OS on which it runs. A software developer can package an application and all its components into an image and distribute it for use. The recipe from which Docker builds such an image is a text file called a Dockerfile; a container is a running instance of the image. Images can be downloaded and executed on any computer (physical or VM) because the container uses the resources of the host OS for execution. In this respect containers are very similar to VMs. Open-source tools like Kubernetes, Ansible and OpenShift can be used for efficient management of containers.
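To make the packaging step more concrete, here is a minimal sketch of how an application and its dependencies might be described for Docker. It renders the text of a small Dockerfile for a Python application. The function name `render_dockerfile`, the base image tag `python:3.12-slim` and the file names `requirements.txt` and `app.py` are assumptions chosen for illustration; they do not come from any particular project.

```python
def render_dockerfile(base_image: str, requirements: str, entrypoint: str) -> str:
    """Return the text of a minimal Dockerfile for a Python application.

    All three arguments are illustrative placeholders supplied by the caller.
    """
    lines = [
        f"FROM {base_image}",                                  # image the application builds on
        "WORKDIR /app",                                        # working directory inside the image
        f"COPY {requirements} .",                              # copy the dependency list first
        f"RUN pip install --no-cache-dir -r {requirements}",   # install the dependencies
        "COPY . .",                                            # copy the application code
        f'CMD ["python", "{entrypoint}"]',                     # command run when a container starts
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_dockerfile("python:3.12-slim", "requirements.txt", "app.py"))
```

Saving the printed text as a file named `Dockerfile` next to the application gives Docker what it needs to build an image; every container started from that image then carries the same dependencies.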

How is a Container different from a VM?

Containers and VMs both use the concept of virtualisation. Though containers work like VMs, they differ in scope. A VM contains a complete guest OS, whereas a container holds only the components the application needs and shares the kernel of the host. Unlike VMs, containers do not need a hypervisor, so the parts of a full VM that the application never uses do not have to be installed. As a result, a container has a smaller footprint on the system than a complete VM.

How does Docker work?

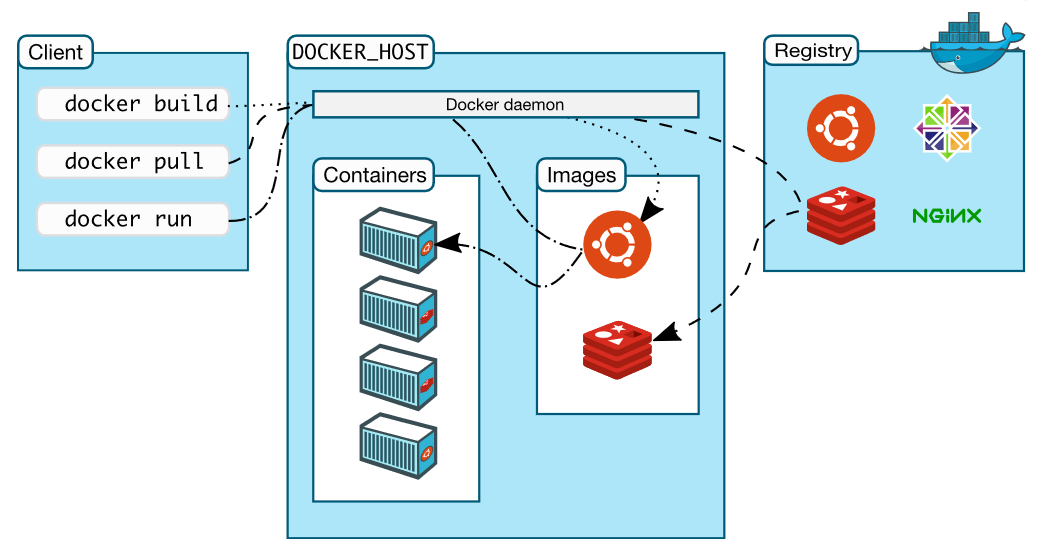

Docker has a client-server architecture.

The Docker client communicates through a REST API with the Docker daemon, which can run on the same machine or on a remote one.

- The daemon (dockerd) is responsible for building, running and distributing containers. It listens for requests from the client and manages Docker objects such as images, containers, networks and volumes.

- The client (docker) is the command-line tool through which users interact with Docker. A client can communicate with multiple Docker daemons.

- A Docker registry stores images of applications. Docker Store allows you to buy and sell Docker images or distribute them for free. Public registries are available, and you can also create a private registry.
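The client-server exchange described above can be sketched with nothing but the Python standard library. The sketch assumes a Linux host where the daemon listens on the default Unix socket `/var/run/docker.sock`, and it uses the Engine API endpoints `/version` and `/containers/json`. The names `UnixHTTPConnection`, `engine_get`, `summarise_containers` and `main` are made up for this illustration and are not part of Docker.

```python
import http.client
import json
import socket

# Default socket path on Linux; an assumption that may not hold on other setups.
DOCKER_SOCKET = "/var/run/docker.sock"


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that talks to a Unix domain socket instead of TCP."""

    def __init__(self, socket_path: str, timeout: float = 5.0):
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def engine_get(path: str, socket_path: str = DOCKER_SOCKET):
    """Send a GET request to the daemon and return (status, decoded JSON body)."""
    conn = UnixHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        return response.status, json.loads(response.read().decode("utf-8"))
    finally:
        conn.close()


def summarise_containers(containers):
    """Reduce the daemon's container list to (short id, image, state) tuples."""
    return [(c["Id"][:12], c["Image"], c["State"]) for c in containers]


def main():
    # Requires a running daemon and permission to read the socket.
    status, version = engine_get("/version")
    print(status, version.get("Version"))
    status, containers = engine_get("/containers/json")
    for row in summarise_containers(containers):
        print(*row)
```

Calling `main()` on a machine with a running daemon plays the role of the client: it sends requests, and the daemon answers with JSON describing itself and its running containers. The `docker` command-line tool does the same job with far more care around API versions, TLS and error handling.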

What are the other components of Docker?

The main components that make up the Docker ecosystem are:

- Docker Engine: It is the application for creating images and containers. It consists of a server (the daemon), a REST API and the command-line interface (CLI).

- Docker Hub: It is a public registry that hosts Docker images. It is a marketplace in the way Google Play is for Android applications: just as you download Android applications from Google Play, you download Docker images from Docker Hub.

- Docker Compose: It is used to run multiple containers as a single service. Example: If an application requires multiple services to run, say SMTP and MySQL, you can describe both in one file and start the two containers together instead of starting each one separately.

- Docker Storage: It offers various storage drivers that work with the underlying storage devices.

- Docker Cloud: It is a service through which you can connect Docker to a cloud environment in order to deploy, scale and automate containers.

- Docker Swarm mode: It supports clustering and load balancing for Docker. In Swarm mode, the resources of multiple Docker hosts are pooled together to act as one. This enables users to scale up their deployments quickly and to balance the load.
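The Docker Compose example in the list above (SMTP and MySQL started together) could look roughly like the sketch below. It builds the service definition as a Python dictionary and writes it out as JSON, which is also valid YAML, so Compose should be able to read it. The image `mysql:8` and its `MYSQL_ROOT_PASSWORD` variable belong to the official MySQL image; the image name `example/smtp-relay:latest`, the port mapping and the function names are hypothetical placeholders.

```python
import json


def compose_definition(mysql_root_password: str) -> dict:
    """Describe two services that Compose would start together."""
    return {
        "services": {
            "db": {
                "image": "mysql:8",
                # The official MySQL image expects a root password at first start.
                "environment": {"MYSQL_ROOT_PASSWORD": mysql_root_password},
            },
            "smtp": {
                # Hypothetical image name, used only for illustration.
                "image": "example/smtp-relay:latest",
                # Hypothetical mapping: host port 2525 to container port 25.
                "ports": ["2525:25"],
            },
        }
    }


def render_compose(definition: dict) -> str:
    """Serialise the definition as JSON text with a trailing newline."""
    return json.dumps(definition, indent=2) + "\n"


if __name__ == "__main__":
    print(render_compose(compose_definition("change-me")))
```

If the output is saved as, say, `docker-compose.json`, running `docker compose -f docker-compose.json up` would start both containers with one command. In practice Compose files are normally written by hand in YAML; generating one from Python is only a way to show the structure.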

What are the advantages of Docker containers?

- Fast delivery of service: Containers speed up the development, testing and deployment of an application. A container image is much smaller than a VM image, so it can be transferred even over low-bandwidth networks. Example: A developer writes code locally and shares it with colleagues over the network as a container. They can test it straight away, without worrying about the platform or waiting for a compatible one, and collaborate seamlessly, which reduces the time required to deliver a service.

- Consistency: A packaged application behaves consistently on machines with different platforms. This frees the developer from worrying about compatibility issues, leaving them to focus entirely on development. Example: An application developed on Ubuntu will behave on a computer running RHEL just as it does on Ubuntu.

- Scalability: Containers are portable and can be migrated from one system to another like a VM. This makes them highly scalable: you can deploy new applications or kill old ones almost instantly.

- Smaller footprint, so more workload on the same hardware: Docker containers are very lightweight and have a low footprint, unlike a VM, which reserves all the resources it has been granted. This allows more containers to run simultaneously on a system.

- Security: Containers provide a considerable level of security to their applications because they remain isolated from each other on the host OS. An application is less likely to be affected by other, vulnerable applications.

- Deployment anywhere: Docker containers can be deployed on any physical machine or VM, and they can also be used in a cloud to enhance its performance.

- Cost efficiency: Docker is open source. We need not buy a complete VM to run just a few applications; we can use only the containers that hold the applications we need. This saves the cost of not-so-useful components and can drastically reduce expenditure.

Is Docker's containerisation a unique concept?

Apparently not. There are services on the web (Software as a Service) that work like a container and were available long before the release of Docker. Example: Web services like YouTube, Google Drive and others pack the required components together so that they run efficiently on your computer system, irrespective of the platform you are running. Although the open-source Docker is widely used and dominates the containerisation market, there are alternatives to it like the proprietary VMware vApp, Opscode Chef, Canonical LXD, Virtuozzo, etc.
