Data Replication or Data Back-Up – Recognize What You Need!

The use of back-up strategies by businesses has grown organically. In the early days, manually copying essential files to removable media was a tedious task for the system administrator. At first, a stack of floppy disks was sufficient, but as storage capacity increased over time, the range of removable media expanded too, and tapes and hard drives of much higher capacity followed.

The Decades When Point-in-Time Backups Were Enough

Since the volume of data back then was comparatively small, this method was enough. A single complete copy of the data, taken at the time of significant data operations, was sufficient.

Later, falling costs and rapid technological advances led to a better understanding of how important data is. This growing awareness created problems of its own, though. The expanding volume of stored data could no longer be transferred to external media quickly enough when a back-up had to be created overnight.

This also revealed a further issue with traditional back-up techniques: recovering lost data from the back-up in minimal time. It usually took three hours to retrieve the data after a disaster. Larger back-ups could be spread across multiple devices, but gathering them to recover a full system was a strenuous task that took days. So it was inevitable that your business could suffer for days before it was fully up and running again.
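To see why growing data volumes break an overnight back-up window, a rough calculation helps. The sketch below is only an illustration: the function names, the 2 TB data set, the 40 MB/s throughput and the 8-hour window are all assumed figures, not measurements from any real system.

```python
def transfer_hours(data_gb: float, throughput_mb_s: float) -> float:
    """Hours needed to copy data_gb gigabytes at throughput_mb_s megabytes per second."""
    if throughput_mb_s <= 0:
        raise ValueError("throughput must be positive")
    seconds = (data_gb * 1000) / throughput_mb_s  # 1 GB taken as 1000 MB
    return seconds / 3600


def fits_window(data_gb: float, throughput_mb_s: float, window_hours: float) -> bool:
    """True if the full copy finishes inside the back-up window."""
    return transfer_hours(data_gb, throughput_mb_s) <= window_hours


if __name__ == "__main__":
    # Assumed example: 2 TB of data, 40 MB/s to external media, 8-hour night window.
    hours = transfer_hours(2000, 40)
    print(f"Full copy needs about {hours:.1f} hours")
    print("Fits the overnight window:", fits_window(2000, 40, 8))
```

The same arithmetic applies in reverse to a restore, which is why reading a large back-up from several devices could stretch recovery far beyond a single night.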

Finding a Subordinate Data Centre for Replication

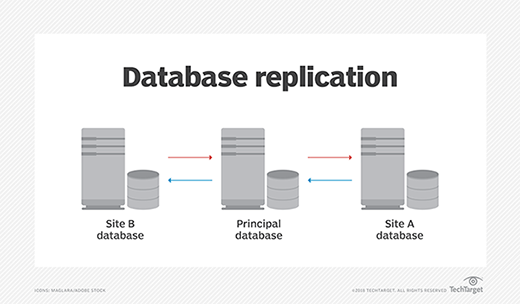

At such a time, enterprises needed secondary (subordinate) data centres. With near-real-time replication, remote data synchronisation became smoother. The replica could take over whenever a disaster made failover necessary.

In the early days of replication, the technology was costly because you had to purchase a complete second set of infrastructure for the central systems, so only bigger companies could afford it. Moreover, replication was done in batches, just like traditional back-ups, which provided a point-in-time copy at the remote location. When a failure occurred, there was always a high possibility of losing some snapshots.

Despite its disadvantages, early replication was a crucial step and the best option available at the time. It could bring mission-critical data back online within minutes and reduce the damage by restoring business continuity quickly.

Cloud-Based Replication – Tomorrow Is Here

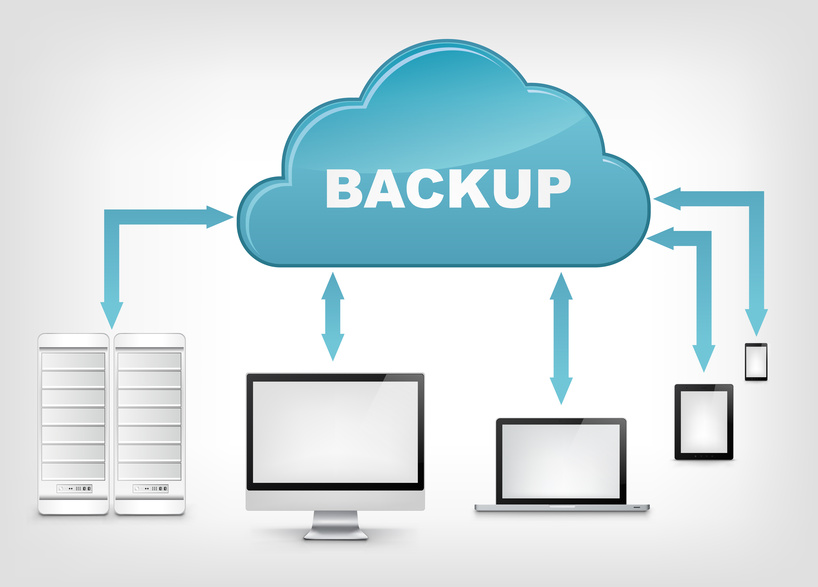

Several technological advancements have made replication practical for all types of business. High-speed, low-cost data connectivity and the immense scalability and flexibility of the cloud have shattered many barriers. As a result, organisations can save a lot because they no longer have to build a secondary data centre at extra expense.

Current data replication techniques offer a wide range of options for all types of businesses. You can replicate an entire physical server with all its components or just the components you need from a VM. You can use a single cloud service or a combination of several, with significant control over choosing the replication destination to suit your needs.

You can also fine-tune and control data-recovery operations with cloud-based replication. The right cloud service provider (CSP) will let you define disaster recovery parameters such as the recovery time objective (RTO) and the recovery point objective (RPO). The provider will also ask for your resource requirements, such as memory allocation and processor configuration, in line with your budget. You should also get the SLAs defined by the CSP so that you can assess its reliability. You can synchronise a significant amount of data in real time and at low cost.
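As a rough illustration of how RTO and RPO targets can be checked against a replication setup, here is a minimal sketch. Everything in it is an assumption made for the example: the `RecoveryPolicy` fields, the function names and the sample numbers are hypothetical and do not correspond to any particular provider's API. It also assumes a simple batch model in which the worst-case data loss equals the sync interval plus the time a batch takes to reach the remote site.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RecoveryPolicy:
    """Hypothetical disaster recovery targets and resource requirements."""
    rpo_minutes: float  # maximum tolerable data loss, expressed as time
    rto_minutes: float  # maximum tolerable downtime
    memory_gb: int      # memory to allocate at the recovery site
    vcpus: int          # processors to allocate at the recovery site


def worst_case_loss_minutes(sync_interval_minutes: float, transfer_minutes: float) -> float:
    """Assumed model: a change made just after a sync waits one full interval,
    then needs the transfer time before it is safe at the remote site."""
    return sync_interval_minutes + transfer_minutes


def check_policy(policy: RecoveryPolicy, sync_interval_minutes: float,
                 transfer_minutes: float, failover_minutes: float) -> List[str]:
    """Return a list of unmet targets; an empty list means the setup meets the policy."""
    problems = []
    loss = worst_case_loss_minutes(sync_interval_minutes, transfer_minutes)
    if loss > policy.rpo_minutes:
        problems.append(f"RPO missed: up to {loss:g} min at risk, target {policy.rpo_minutes:g} min")
    if failover_minutes > policy.rto_minutes:
        problems.append(f"RTO missed: failover takes {failover_minutes:g} min, target {policy.rto_minutes:g} min")
    return problems


if __name__ == "__main__":
    policy = RecoveryPolicy(rpo_minutes=15, rto_minutes=60, memory_gb=16, vcpus=4)
    # Assumed setup: sync every 5 minutes, 2 minutes per transfer, 30-minute failover.
    print(check_policy(policy, sync_interval_minutes=5, transfer_minutes=2, failover_minutes=30))
```

Writing the targets down in this form makes it easier to compare what a provider's SLA promises with what your business can actually tolerate.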

The Last Lesson

Even though data replication is widely available, it is only one part of a complete disaster recovery solution. You have to ensure that the replicated information is correct and that the system can take over smoothly when your website goes offline. You should also check whether you get a testing facility before deploying the replication systems. The tests need to be performed regularly so that you are not caught out when disaster strikes and you get your data back as it was. This step will strengthen your business continuity.
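One simple way to check that replicated information is correct is to compare checksums of the source files and their replicas. The sketch below assumes both copies are reachable as local directories, which will not hold for every setup, and the function names `sha256_of` and `compare_trees` are made up for this example. It uses only Python's standard `hashlib` and `pathlib` modules.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files do not have to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def compare_trees(source: Path, replica: Path) -> dict:
    """Report source files that are missing from, or differ in, the replica."""
    missing, mismatched = [], []
    for src_file in sorted(p for p in source.rglob("*") if p.is_file()):
        relative = src_file.relative_to(source)
        copy = replica / relative
        if not copy.is_file():
            missing.append(relative.as_posix())
        elif sha256_of(src_file) != sha256_of(copy):
            mismatched.append(relative.as_posix())
    return {"missing": missing, "mismatched": mismatched}
```

Running a check like this on a schedule, and alerting when either list is non-empty, is one way to make the regular testing described above routine. It only verifies file contents, so a full failover drill is still needed to confirm that the system actually starts.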

You can learn more about disaster recovery from our earlier blogs. If you want to benefit from our disaster recovery services, please check this.
