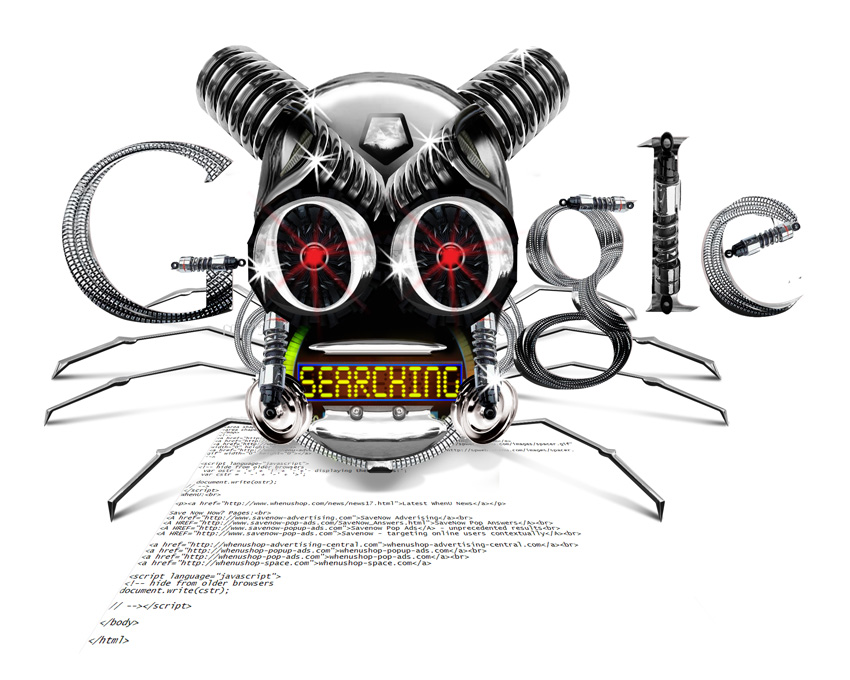

What Are The Famous Robots Of Google?

Robots, crawlers, or spiders are small programs that Google sends out to investigate, analyze, and scan millions of web pages. They usually move from one page to another by following the links those pages offer.

What Does This Mean?

Above all, it means they range across the network looking for documents. Once they have found the first one, they continue their search by indexing the documents referred to in it.
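As a rough illustration of how a robot finds the documents "referred to" in a page, here is a minimal sketch that collects the links from a piece of HTML. The `LinkCollector` class, the `extract_links` helper, and the example addresses are made up for this post; they say nothing about how Google's own robots are written.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the absolute URL of every <a href="..."> in a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        for name, value in attrs:
            if name == "href" and value:
                # Resolve relative links against the address of the page itself.
                self.links.append(urljoin(self.base_url, value))


def extract_links(base_url, html):
    """Hypothetical helper: return all links found in the given HTML text."""
    collector = LinkCollector(base_url)
    collector.feed(html)
    return collector.links


# A tiny made-up page, so the example runs without touching the network.
SAMPLE_HTML = (
    '<p><a href="/about.html">About</a> '
    '<a href="http://other.example/">Other</a></p>'
)
found = extract_links("http://site.example/index.html", SAMPLE_HTML)
print(found)
```

Each address in `found` is a candidate for the robot's next visit.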

What Are They Used For?

- Indexing

- HTML validation

- Link validation

- Monitoring what is new ("What's New")

- Mirroring

Are They Bad?

Not really, but keep in mind that these robots are programmed by humans, and humans make mistakes. The people in charge of running robots must therefore be very careful, and the authors of robots must program them so that it is difficult for those people to make mistakes with serious consequences. In general, though, most robots are responsibly and intelligently designed, do not cause major problems, and provide a very valuable service. As we say, robots are neither bad nor good; they just need the appropriate care.

Where Do They Start?

Robots usually start with a fixed database of addresses and then expand from there, following the references they find. Some search engines also offer a section where you can submit your site, so that they send a small robot to index it and add it to their database.
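The "start from a fixed list, then expand" idea can be sketched as a breadth-first walk. This is only an illustration under simple assumptions: `FAKE_WEB` is an invented in-memory map of pages to links that stands in for real downloads, and `crawl` is a hypothetical function, not any search engine's actual code.

```python
from collections import deque

# Invented in-memory "web": each address maps to the addresses it links to.
FAKE_WEB = {
    "http://a.example/": ["http://b.example/", "http://c.example/"],
    "http://b.example/": ["http://c.example/", "http://d.example/"],
    "http://c.example/": ["http://a.example/"],
    "http://d.example/": [],
}


def crawl(seeds, get_links, max_pages=100):
    """Visit pages breadth-first, starting from the fixed seed list."""
    queue = deque(seeds)   # addresses waiting to be visited
    seen = set(seeds)      # addresses already queued, to avoid loops
    visited = []           # addresses in the order they were visited
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        visited.append(url)
        for link in get_links(url):
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


order = crawl(["http://a.example/"], lambda url: FAKE_WEB.get(url, []))
print(order)
```

The `seen` set is what stops the robot from going around in circles when pages link back to each other, and `max_pages` keeps the walk bounded.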

How To Tell The Robots What To Index Or Not?

In other words, a site administrator can restrict the activity of robots on certain files. Sometimes we want a robot to visit so that the site appears in search engines, and sometimes we do not. We may prefer that some content is not indexed, or that only certain search engines index us. The variants depend on what we want, and there are quite a lot of them. This is where the famous robots.txt file comes into play. The file should be placed in the root directory of the site on our dedicated server, because when a robot reaches the site it usually looks for this file first to see what restrictions we have set and how it should act. In it we leave the relevant orders, either letting the robot work freely or spelling out our restrictions, so that the robot knows exactly what to do.
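To make this more concrete, here is a small sketch that reads a sample robots.txt with Python's standard `urllib.robotparser` module and asks what a given robot may fetch. The rules, the robot name `BadBot`, and the `site.example` addresses are invented for illustration; your own file will depend on what you want indexed.

```python
from urllib.robotparser import RobotFileParser

# Invented example rules: block one robot entirely,
# and keep every other robot out of /private/.
ROBOTS_TXT = """\
User-agent: BadBot
Disallow: /

User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Any robot not named in the file falls under the "*" rules.
public_ok = parser.can_fetch("Googlebot", "http://site.example/index.html")
private_ok = parser.can_fetch("Googlebot", "http://site.example/private/a.html")
# BadBot is shut out of the whole site.
badbot_ok = parser.can_fetch("BadBot", "http://site.example/index.html")

print(public_ok, private_ok, badbot_ok)  # True False False
```

Keep in mind that robots.txt is a request, not a lock: well-behaved robots follow it, but nothing forces a badly behaved one to do so.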
