Apple vs. Microsoft: The First Versions Of Windows and macOS

In 1983 Apple introduced a great innovation, the Lisa, which used a quite elaborate graphical interface and came with a suite of office applications in the style of today's Office. The interface worked well, applications ran with surprising performance, and the configuration was far superior to that of the PCs of the time. The problem was the price: $10,000 at the time.

Although there was nothing better on the market, the Lisa did not achieve the expected success. In total, about 100,000 units were produced in two years, but most of them were sold at deep discounts, often below cost. Because Apple had invested approximately $150 million in the development of the Lisa, the project ended up in the red.

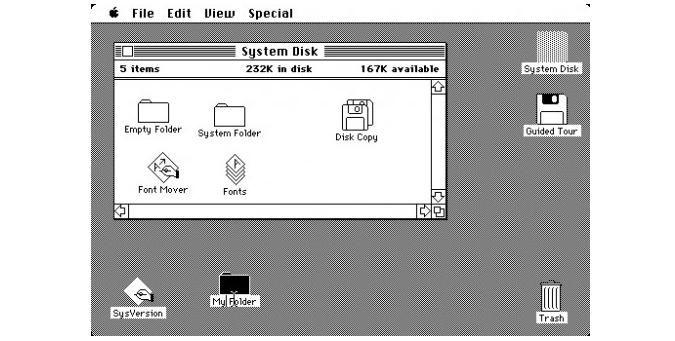

Nevertheless, the development of the Lisa was the basis for the Macintosh, a simpler computer released in 1984. Unlike the Lisa, it was a great success, even threatening the PC's dominance. Its configuration was similar to that of the PCs of the era, with an 8 MHz processor, 128 KB of memory, and a 9-inch monitor. The Macintosh's big weapon was MacOS 1.0 (derived from the Lisa operating system, but optimized to consume much less memory), a system that was innovative from several points of view.

Unlike MS-DOS, it was based entirely on the graphical interface and the mouse, which made it much easier to operate. MacOS continued to evolve and incorporate new features, always keeping the same idea of a user-friendly interface.

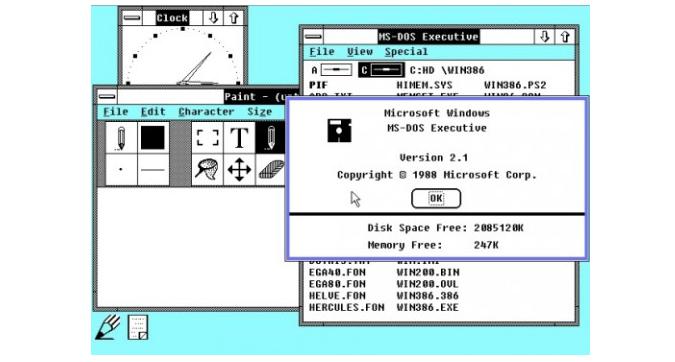

During this same period, Microsoft was developing the first version of Windows, which was announced in November 1983. Unlike MacOS, Windows 1.0 was a rather primitive interface and had little success. It ran on top of MS-DOS and could run both Windows applications and MS-DOS programs. The problem was memory.

The PCs of the era came with very small amounts of RAM, and at that time there was still no possibility of using virtual memory (which would be supported only from the 386 onward). To run Windows, it was first necessary to load MS-DOS. The two together consumed virtually all the memory of a basic PC of the era. Even the more powerful PCs could not run many applications at once, again for lack of memory.

Since Windows applications were very rare at the time, few users saw the need to use Windows to run the same applications that already ran (with more available memory) in MS-DOS. On top of that, the initial release of Windows was very slow and had too many bugs.

Windows started to have some success with version 2.1, when 286 computers with 1 MB or more of memory were common. With a more powerful configuration, more memory, and more applications, it finally began to make sense to run Windows. The system still had many problems and crashed often, but some users began to migrate to it. Windows 2.1 also gained a version for PCs with 386 processors, with support for memory protection and the use of virtual 8086 mode to run MS-DOS applications.

Windows really took off with version 3.11 (for Workgroups), which was also the first version of Windows to support network shares. It was relatively light for the PCs of the time (386 machines with 4 or 8 MB of RAM were common) and supported swap memory, allowing multiple applications to stay open even when RAM ran out. By then the application base for Windows was already much larger (the most used titles were Word and Excel, not much different from what we have on many desktops today), and there was always the option of returning to MS-DOS when something went wrong.

At this point PCs began to regain the ground they had lost to Apple's Macintosh. Although it was unstable, Windows 3.11 had a large number of applications, and PCs were cheaper than Macs.

Strange as it may seem, Windows 3.11 was still a 16-bit system that operated more like a GUI for MS-DOS than as an operating system in its own right. When you turned on the PC, MS-DOS was loaded and you had to type “win” at the prompt to load Windows. Of course, you could set your PC to boot into Windows by default by adding the command to autoexec.bat, but many users (possibly most) preferred to start it manually, so that they could fix things from DOS if Windows hung while loading.

In August 1995 Microsoft launched Windows 95, which marked the platform's transition to 32 bits. Besides all the interface improvements and the new API, Windows 95 brought two important changes: support for preemptive multitasking and memory protection.

Windows 3.11 used a primitive type of multitasking called cooperative multitasking. In it, no memory protection was used, there was no real multitasking, and all applications had full access to all system resources. The idea was that each application would use the processor for a while, then pass it on to another program and wait for its turn to come around again to run another handful of operations.

This anarchic system left room for all sorts of problems, since an application could monopolize the processor and end up halting the system completely if something went wrong. Another big problem was that, without memory protection, applications could invade areas of memory occupied by others, causing a GPF (“General Protection Fault”), the general error that produced the famous Windows blue screen.
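The cooperative model described above can be illustrated with a toy round-robin scheduler. This is only a minimal sketch in Python, not how Windows 3.11 was actually implemented: the names `task` and `run_cooperative` are hypothetical, generators stand in for applications, and each `yield` represents the point where an application voluntarily hands the processor back.

```python
from collections import deque


def task(name, steps, log):
    """A well-behaved application: do one unit of work, then yield."""
    for i in range(steps):
        log.append(f"{name}:{i}")
        yield  # voluntarily hand the processor back to the scheduler


def run_cooperative(tasks):
    """Round-robin over tasks; control returns only when a task yields."""
    queue = deque(tasks)
    while queue:
        current = queue.popleft()
        try:
            next(current)          # run until the task's next yield
            queue.append(current)  # still has work: back of the line
        except StopIteration:
            pass                   # task finished, drop it


log = []
run_cooperative([task("A", 2, log), task("B", 3, log)])
print(log)  # ['A:0', 'B:0', 'A:1', 'B:1', 'B:2']
```

A task that looped forever without reaching a `yield` would never return control to `run_cooperative`, which is the toy equivalent of a single application freezing the whole system.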

Memory protection consists of isolating the area of memory used by each application, preventing it from accessing areas of memory used by others. Beyond the question of stability, this is also a basic security function, since it prevents malicious applications from having easy access to data handled by other applications.

Although the hardware had supported it since the 386, memory protection only came into general use with Windows 95, which also introduced the preemptive multitasking already mentioned, isolating the memory areas occupied by applications and managing the use of system resources.
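For contrast with the cooperative model, preemptive multitasking can be sketched as a simulation of time slicing. This is a simplified illustration under stated assumptions, not Windows 95's scheduler: the function `run_preemptive`, the task names, and the `quantum` parameter are hypothetical, and a real system interrupts tasks with a hardware timer rather than by counting units of work.

```python
from collections import deque


def run_preemptive(work, quantum):
    """Simulate time slicing: `work` maps task name -> units of work left.

    The scheduler, not the task, decides when to switch: after at most
    `quantum` units the task is interrupted and sent to the back of the queue.
    Returns the sequence of (task, units run) slices.
    """
    queue = deque(work.items())
    timeline = []
    while queue:
        name, remaining = queue.popleft()
        used = min(quantum, remaining)
        timeline.append((name, used))
        if remaining > used:
            queue.append((name, remaining - used))
    return timeline


timeline = run_preemptive({"greedy": 5, "editor": 2}, quantum=2)
print(timeline)  # [('greedy', 2), ('editor', 2), ('greedy', 2), ('greedy', 1)]
```

Even though the "greedy" task has more work to do, it cannot hold the processor for longer than one quantum, so the "editor" task still gets its turn.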

The big problem was that, although it was a 32-bit system, Windows 95 still had many 16-bit components and maintained compatibility with the 16-bit applications of Windows 3.11. As long as only 32-bit applications were used, things worked fairly well, but when a 16-bit application was executed, the system went back to using cooperative multitasking, bringing back the same problems.

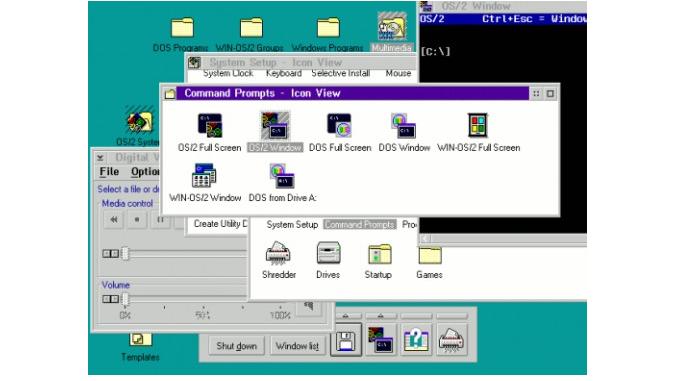

At the time, Windows was facing competition from IBM's OS/2, which stood out as a more robust option, especially for business users.

OS/2 arose from a partnership between IBM and Microsoft to develop a new operating system for the PC platform, capable of succeeding MS-DOS (and the early versions of Windows, which ran on top of it). Initially, OS/2 was developed to take advantage of the features introduced by the 286 (new instructions, support for 16 MB of memory, etc.), but it was quickly rewritten as a 32-bit operating system, able to use all the features of the 386 and later processors.

However, the success of Windows 3.x made Microsoft change its mind and come to see Windows (not OS/2) as the future of the PC platform. Tensions kept increasing until the partnership was broken in 1991, turning Microsoft and IBM into competitors. IBM continued to invest in the development of OS/2, while Microsoft used the code already written as the starting point for Windows NT, which it developed in parallel with Windows 95.

Although OS/2 was technically superior to Windows 95, it was Microsoft that ended up coming out ahead, because Windows 95 was easier to use and benefited from users’ familiarity with Windows 3.11, while IBM stumbled on a combination of lack of investment, lack of support for developers, and lack of marketing.

Thanks to the terms of the earlier agreement, IBM was able to include support for Windows applications in OS/2. However, the strategy backfired: it discouraged the development of native applications for OS/2, so the system ended up competing with Windows on Windows's own territory. Running Windows applications inside OS/2 was more problematic and performance was lower, leading more and more users to prefer using Windows directly.

Although it is officially dead, OS/2 is still used by some companies and some groups of enthusiasts. In 2005, Serenity bought the rights to the system, giving rise to eComStation.

A much more successful system that began to be developed in the early 90s is Linux, which we all already know. Linux has the advantage of being an open system, which currently has the support of thousands of volunteers and developers around the globe as well as heavyweight companies such as IBM, HP, Oracle, and virtually every other major tech company with the exception of Microsoft and Apple.

In the beginning, however, the system was much more complicated than current distributions and did not have the polished graphical interfaces we have today. While Linux has been strong on dedicated servers since the late 90s, its use on desktops is growing at a slower pace.

Back to the history of Windows: with the release of NT, Microsoft came to maintain two separate development branches, one with Windows 95 for the consumer market and the other with Windows NT for the corporate audience.

The Windows 95 branch gave rise to Windows 98, 98SE, and ME, which, despite progress in the API and applications, retained the fundamental stability problems of Windows 95. Another major problem is that these versions of Windows were developed without a security model, in an era in which files were exchanged on diskettes and machines were accessible only locally. This made the system easy to use and let it run with good performance on the machines of the time, but it became a heavy burden as access to the Web became popular and the system started to be targeted by all sorts of attacks.

The Windows NT branch, on the other hand, was much more successful in creating a modern operating system, one that was much more stable and had a more solid foundation from the point of view of security. Windows NT 4 gave rise to Windows 2000, which in turn was used as the foundation for Windows XP and Windows Server 2003, kicking off the current line.

Apple, in turn, has undergone two major revolutions. The first was the migration from the old MacOS to OS X, which, beneath the polished interface, is a Unix system derived from BSD. The second happened in 2005, when Apple announced the migration of its entire line of desktops and notebooks to Intel processors, which allowed it to benefit from the lower cost of processors and other PC components while retaining the main difference of the platform, which is the software.

From the hardware point of view, Macs today are not much different from PCs; you can even run Windows and Linux on them via Boot Camp. However, only Macs are capable of running Mac OS X, due to the use of EFI (which replaces the system BIOS as the bootstrap) and the use of a TPM chip.

Despite the change, Macs remain a closed platform controlled by Apple, which develops both the computers and the operating system. Of course much is outsourced, and many companies develop software and accessories, but since Apple has to keep track of everything and develop a lot on its own, the cost of a Mac ends up being higher than that of a PC.

In the early 80s, the competition was fierce, and many felt that the Apple model would prevail, but that’s not what happened. Within the history of computing, we have many stories that show that open standards almost always prevail. An environment where there are several companies competing with one another promotes the development of better products, which creates greater demand and, because of economies of scale, allows lower prices.

Since PCs have an open architecture, several different manufacturers can participate and develop their own components based on established standards. There is a huge list of mutually compatible components, which lets us choose the best options among various brands and models.

Any new manufacturer with a cheaper motherboard or a faster processor, for example, can enter the market; it’s just a matter of creating the necessary demand. Competition forces manufacturers to work with relatively low profit margins and to make their money on sales volume, which is very good for those of us who buy.
