The Rise of GPUs: The New Backbone of AI Development

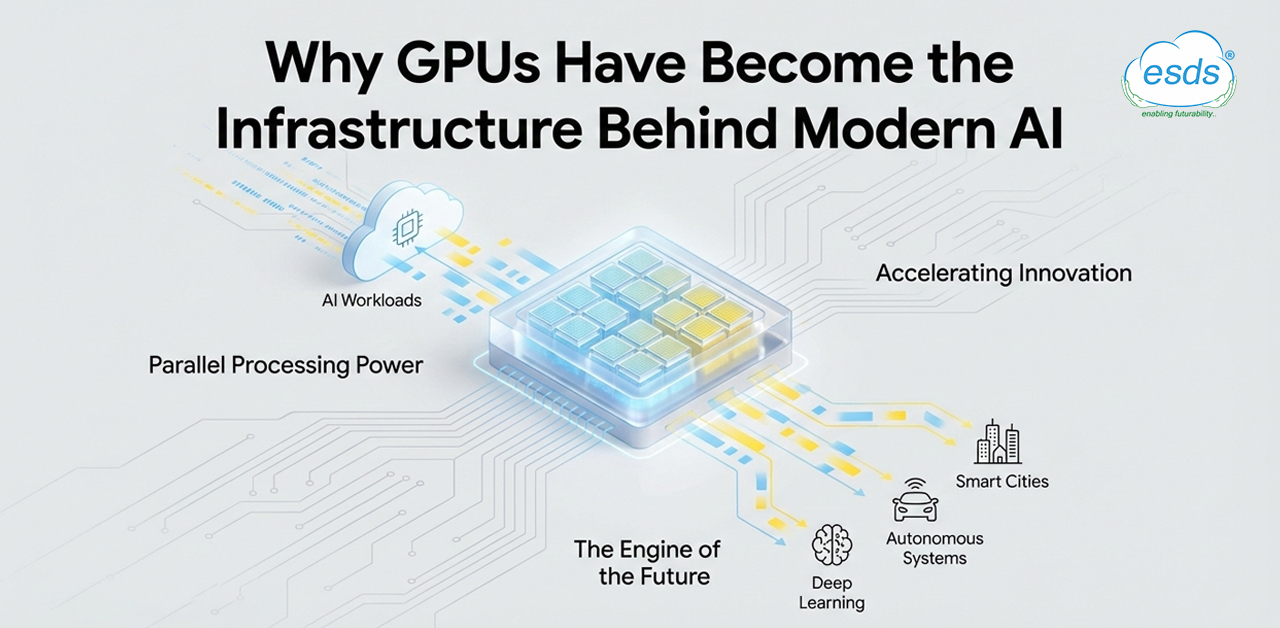

For years, AI professionals measured progress in the field by better algorithms, larger datasets and more capable models. Compute was secondary. Today, large-scale computing power is a primary concern, especially for organizations that train and deploy AI models. GPUs were once treated as add-ons; now they are the core engines behind modern AI. They determine how fast models can be trained, how large they can grow and who can build them. Compute used to be an enabler. Now it is a constraint.

Organizations keep releasing newer AI models, but the supply of GPUs is limited. The scale of investment by large corporations in AI infrastructure shows how much this matters. A Goldman Sachs report notes that hyperscale cloud providers plan to spend over $600 billion on capital investments in 2026. About three-quarters of that, roughly $450 billion, will go into AI infrastructure such as GPUs, servers and data centers (Source: Goldman Sachs). This reflects how central computing has become to building and advancing AI.

1. From Breakthroughs to Bottlenecks

In the early 2010s, progress came from new ideas in model design. Algorithms improved, datasets grew and performance rose. Compute was always part of the picture, but it wasn't the limiting factor. As models grew and training demands rose, that balance changed, and infrastructure has not been able to keep up. The main bottleneck in AI today is access to sufficient computing power (Source: Our World in Data).

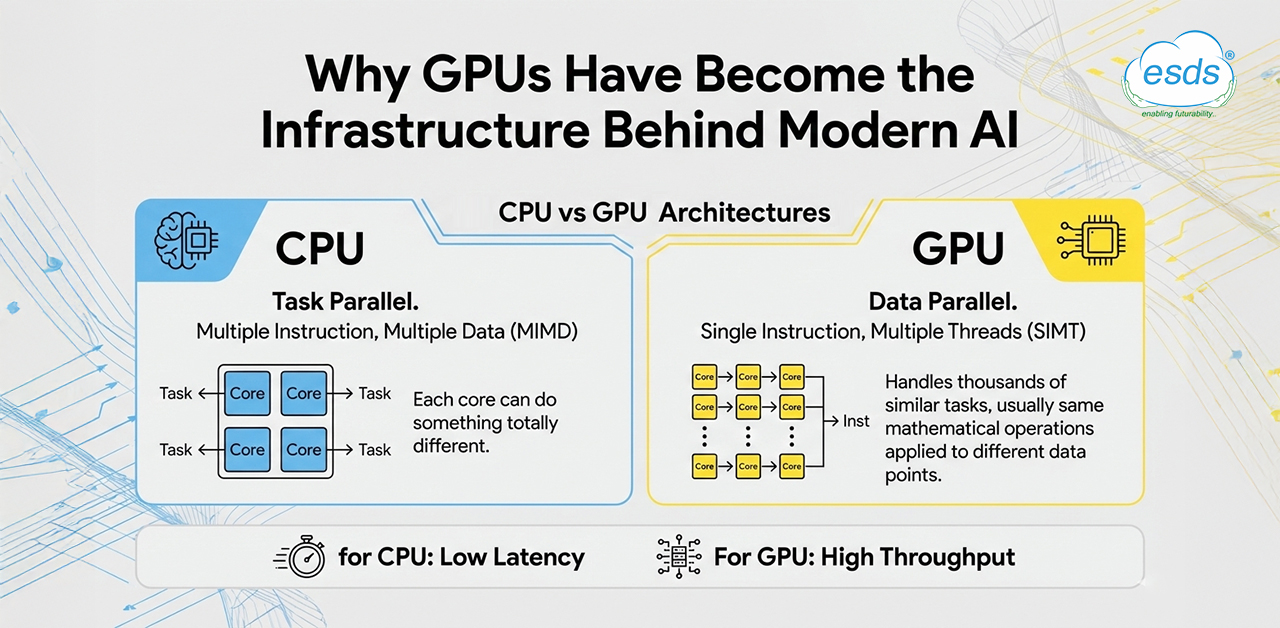

2. Why GPUs, Not CPUs, Power AI

Organizations have shifted from CPUs to GPUs for training and deployment. AI models and neural networks require repetitive work: the same mathematical operations applied to vast amounts of data, again and again. Hardware for this job needs massive parallelism, high throughput and sustained efficiency over long runs. GPUs are built for these demands. CPUs, on the other hand, are designed for sequential, general-purpose tasks. As a result, GPUs have become the primary drivers of modern AI systems (Source: IBM).
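To make the idea of "the same operation, repeated independently" concrete, here is a minimal Python sketch. It is an illustration, not how GPUs are actually programmed: the function names (`dot`, `matvec_sequential`, `matvec_parallel`) and the tiny weight matrix are hypothetical, and Python threads stand in for GPU cores only to show the structure of the work. Because of Python's global interpreter lock, this version will not run faster; the point is that each output value depends only on its own row, so the work can be split across many workers without coordination.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, vec):
    """One output element: a multiply-accumulate over a single row."""
    return sum(w * x for w, x in zip(row, vec))


def matvec_sequential(weights, vec):
    # Walk through the rows one after another, as a single core would.
    return [dot(row, vec) for row in weights]


def matvec_parallel(weights, vec, workers=4):
    # Each row's result depends only on that row and the input vector,
    # so rows can be handed to independent workers.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(lambda row: dot(row, vec), weights))


# Hypothetical toy data: a 4x3 weight matrix and a 3-element input.
weights = [[1, 2, 3], [4, 5, 6], [7, 8, 9], [10, 11, 12]]
vec = [1, 0, -1]

print(matvec_sequential(weights, vec))  # prints [-2, -2, -2, -2]
print(matvec_parallel(weights, vec) == matvec_sequential(weights, vec))  # prints True
```

A neural network layer repeats this pattern across very large matrices, which is why hardware with thousands of simple parallel units suits the workload better than a few fast sequential cores.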

3. When Compute Becomes a Resource, Not a Tool

When models are trained around the clock, compute becomes scarce. Training, inference, monitoring and retraining all need uninterrupted access to compute to run smoothly. To make the most of what they have, teams must prioritize how compute is used. This is why compute is often called the new oil. During the industrial revolution, oil determined industrial growth. Today, compute determines the scale of AI.

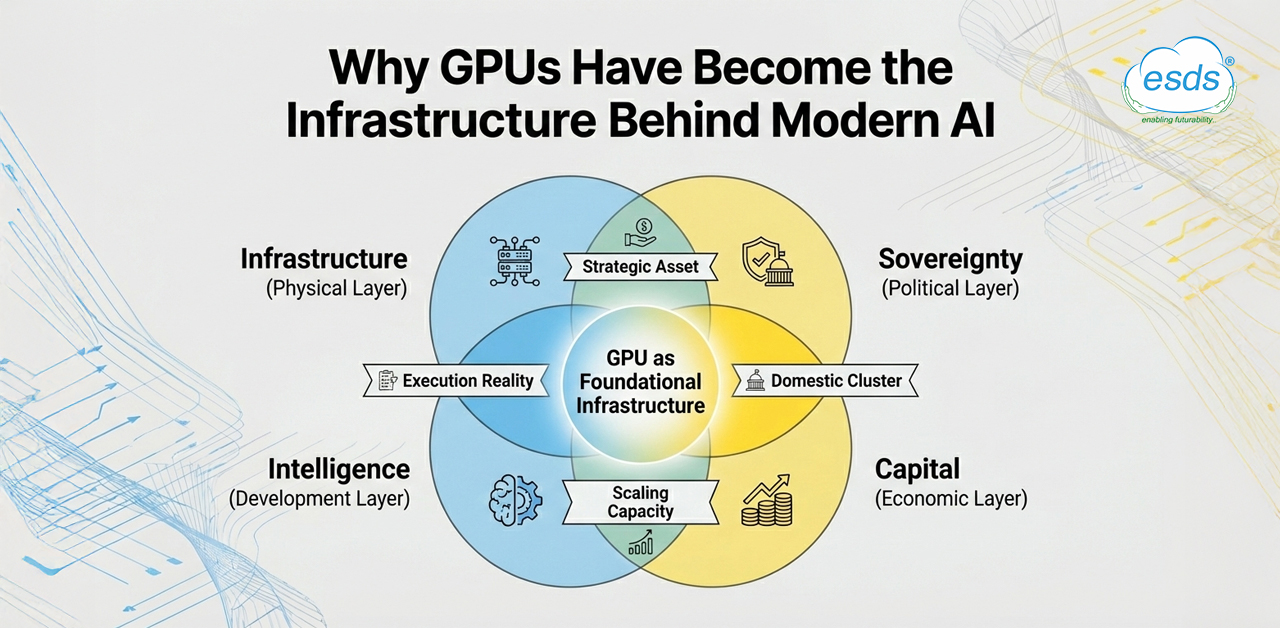

4. Control Exists in Layers, Not Absolutes

No single actor controls compute. A handful of organizations design chips, manufacture hardware, build systems and develop software. Only a few countries have access to the raw materials needed to manufacture semiconductors. Together, these layers determine performance, availability and scale even before models are trained or deployed.

5. Cloud Providers and the Quiet Control of GPUs

As AI systems scale, teams focus on maximizing GPU utilization and securing more capacity when they need it. During peak load, GPU availability matters even more than getting the exact hardware wanted. Organizations sign long-term agreements with service providers, but access still depends on quotas, region limits and scheduling. Cloud providers decide when GPU capacity opens up, which ultimately decides whether progress is slower or faster (Source: Nosana).

6. When GPUs Stop Being Just Hardware

When a component like the GPU becomes a deciding factor in scaling AI, it stops being an ordinary component and becomes a strategic one. GPUs now sit beneath commercial platforms and shape progress in academia, research, defence, technology, innovation and economic activity (Source: European Student Think Tank). Export restrictions on certain materials and infrastructure make this shift visible. Countries or blocs impose them to shape which regions can progress in AI, and they can matter more than talent or data alone. Governments are investing in domestic compute infrastructure to reduce dependency on fragile external supply. GPUs therefore move into the category of critical infrastructure, where stability of access matters as much as performance (Source: ESDS Blogs).

7. What Scarcity Changes for People Building AI

People and organizations working in AI feel the effects of GPU scarcity in different ways.

| Who | How GPU Scarcity Affects Them |
| --- | --- |
| Hyperscalers | They plan long-term GPU supply through multi-year contracts. But they may face long lead times (about 36 to 52 weeks) due to HBM and packaging limits. [clarifai.com] |
| Cloud Providers | They plan for regional expansion. But due to scarcity, they must manage customer demand using quotas, reservation windows and region caps. [windowsforum.com] |
| Enterprises | They plan AI roadmaps around capacity and cost. But scarcity delays deployments and increases reliance on cloud allocation cycles. |
| Startups | They plan to build competitive models. But scarcity forces them to use distillation, quantization and smaller architectures to stay viable. |
| Developers / Engineers | They plan to improve model quality. But scarcity shifts their focus to throughput, memory use and efficiency as the main skill signals. |
| Researchers | They plan experiments freely. But scarcity limits iteration speed because cluster queues and GPU hours dictate what can be tested. |
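A rough calculation shows why techniques like quantization help teams stay viable when GPUs are scarce. The sketch below estimates how much memory a model's weights alone would occupy at different numeric precisions. The 7-billion-parameter model is a hypothetical example, the helper name `weight_memory_gb` is made up for illustration, and the estimate deliberately ignores activations, optimizer state and other runtime overhead, so real requirements will be higher.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {
    "fp32": 4.0,  # 32-bit floating point
    "fp16": 2.0,  # 16-bit floating point
    "int8": 1.0,  # 8-bit integer (quantized)
    "int4": 0.5,  # 4-bit integer (quantized)
}


def weight_memory_gb(num_params, precision):
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Hypothetical model size, chosen only for illustration.
num_params = 7_000_000_000

for precision in BYTES_PER_PARAM:
    gb = weight_memory_gb(num_params, precision)
    print(f"{precision}: {gb:.1f} GB")
# prints:
# fp32: 28.0 GB
# fp16: 14.0 GB
# int8: 7.0 GB
# int4: 3.5 GB
```

Under these assumptions, moving from 16-bit to 4-bit weights cuts the footprint to a quarter, which can be the difference between needing several GPUs and fitting on one.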

8. Can GPU Power Ever Be Widely Shared?

Open models have made a wide range of AI capabilities accessible, but control of the infrastructure beneath them has not shifted. Source code now travels easily; GPU access does not. Training, hosting and serving large systems still depend on a concentrated pool of hardware that only a few providers operate. There are efforts to loosen this grip. Alternative hardware and more efficient methods reduce the pressure, but they still operate alongside GPUs, so the core dependency remains unchanged. In this setting, democratization takes on a different meaning. Universal access to GPUs is not the goal; the focus is on making participation affordable inside this constrained system.

Conclusion

The shift in how AI is being trained, deployed and scaled reflects a deeper change in the industry’s foundation. GPUs were treated as specialized add-ons for many years, but today, they sit at the center of the development of modern AI systems. They form an enabling layer on which current models and research efforts depend.

As this hardware has become strategic, expensive and harder to secure, the nature of progress has changed. Innovation now needs ideas as well as access to enough computing power to put those ideas into practice. Creativity matters too, but even strong concepts now depend on substantial infrastructure to move forward. As AI adoption continues to expand, the central question is shifting from what can be built to what can be run at scale. The focus is moving away from where intelligence is designed and toward where it can be executed and sustained.

- The Rise of GPUs: The New Backbone of AI Development - March 2, 2026
